Overview

Hash cracking is an essential part of pentesting, but it’s resource heavy — running Hashcat on the same machine I’m actively working on slows everything down and ties up the rig. I wanted to offload cracking to a dedicated system I could kick off and walk away from, run overnight, and not think about while I keep working.

I had an i9-13900K and an RTX 2080 Ti sitting from an older build, so rather than buying new hardware I put them to work. This project documents setting up a dedicated cracking VM on Proxmox using PCIe passthrough — configuring IOMMU at the kernel level, binding the GPU to the vfio-pci driver, and passing the 2080 Ti through to an isolated Ubuntu VM running Hashcat.

Infrastructure Context

The cracking station runs as a VM on a Proxmox VE host built from spare parts — an i9-13900K and RTX 2080 Ti from a previous gaming build. I maxed out the RAM with whatever I had on hand, ending up with 128GB. Using a hypervisor rather than bare metal offers:

- Isolation — the cracking workload is fully separated from other lab systems

- Snapshots — clean state before a cracking session, rollback if something breaks

- Resource control — CPU and memory limits prevent the cracking workload from starving other VMs

- Portability — the VM config is declarative and reproducible

The host uses Intel UHD 770 integrated graphics for the Proxmox console, leaving the discrete GPU entirely available for passthrough.

IOMMU Configuration

PCIe passthrough requires IOMMU — Intel’s Virtualization Technology for Directed I/O (VT-d). Without it, the hypervisor cannot safely hand a physical device to a VM.

Enabling IOMMU in the Kernel

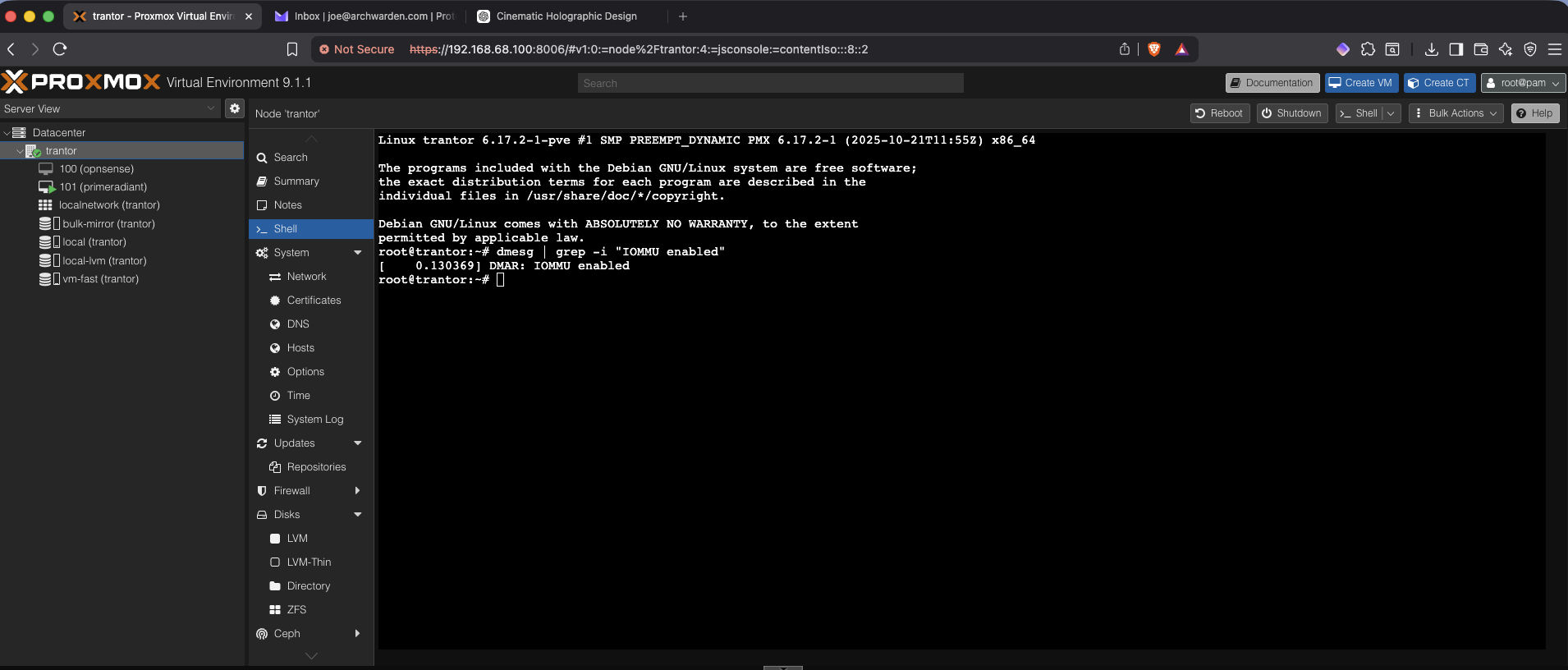

Verified IOMMU hardware support was present but not yet active in the kernel:

dmesg | grep -e DMAR -e IOMMU

Enabled it by adding kernel parameters to GRUB:

# /etc/default/grub

GRUB_CMDLINE_LINUX_DEFAULT="quiet intel_iommu=on iommu=pt"

iommu=pt (passthrough mode) reduces overhead for devices not being passed through, which matters on a host running multiple VMs.
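Once the host has been rebooted with the new GRUB entry, the running kernel's command line can also be checked for both parameters. This is a minimal sketch rather than part of the original setup: the helper name `has_kernel_param` and its optional file argument are illustrative assumptions, and it reads /proc/cmdline by default.

```shell
# Succeeds if the given parameter appears as a whole word on the kernel command line.
# The second argument (file to read) is a hypothetical hook for testing;
# it defaults to /proc/cmdline.
has_kernel_param() {
  local param="$1" file="${2:-/proc/cmdline}"
  # Put each parameter on its own line, then require an exact whole-line match.
  tr ' ' '\n' < "$file" | grep -qxF -- "$param"
}

# Example use on the host:
#   for p in intel_iommu=on iommu=pt; do
#     has_kernel_param "$p" && echo "$p: present" || echo "$p: missing"
#   done
```

Matching whole words avoids a false positive where one parameter is a prefix of another.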

After update-grub and reboot, confirmed active:

dmesg | grep -i "IOMMU enabled"

# [ 0.130573] DMAR: IOMMU enabled

Verifying IOMMU Grouping

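For passthrough to work cleanly, the target device should be in its own IOMMU group. A group is the smallest unit the IOMMU can isolate, so every device in it has to be handed to the VM together, and sharing a group with host devices complicates passthrough.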

find /sys/kernel/iommu_groups/ -type l | sort -V

The RTX 2080 Ti and all its functions landed in group 16, isolated from everything else, which is ideal.
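The raw sysfs paths can be hard to scan on a host with many devices. As a rough sketch, the helper below prints one "group, PCI address" pair per line, sorted by group number. The function name `list_iommu_groups` and its optional root argument are assumptions added for illustration and testing; only the /sys/kernel/iommu_groups layout comes from the kernel.

```shell
# List every PCI device with its IOMMU group as "group<TAB>address", sorted by group.
# The optional first argument (sysfs root) is a hypothetical hook for testing.
list_iommu_groups() {
  local root="${1:-/sys/kernel/iommu_groups}" dev group
  for dev in "$root"/*/devices/*; do
    [ -e "$dev" ] || continue        # glob matched nothing: IOMMU is not active
    group="${dev%/devices/*}"        # strip "/devices/<address>"
    group="${group##*/}"             # keep only the group number
    printf '%s\t%s\n' "$group" "${dev##*/}"
  done | sort -n
}

# Example: show only the devices in group 16
#   list_iommu_groups | awk '$1 == 16'
```

If the filtered output shows anything other than the GPU's own functions, the group is shared and those devices would have to move to the VM as well.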

vfio-pci Binding

By default, Linux loads the nouveau or nvidia driver for the GPU at boot. For passthrough, the GPU must be claimed by vfio-pci before any other driver touches it.

Identifying Device IDs

lspci -n | grep "01:00"

# 01:00.0 0300: 10de:1e07 ← GPU

# 01:00.1 0403: 10de:10f7 ← Audio

# 01:00.2 0c03: 10de:1ad6 ← USB controller

# 01:00.3 0c80: 10de:1ad7 ← USB-C UCSI

Binding to vfio-pci

echo "options vfio-pci ids=10de:1e07,10de:10f7,10de:1ad6,10de:1ad7" > /etc/modprobe.d/vfio.conf

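# Note: vfio_virqfd was folded into the vfio module in kernel 6.2, so that entry is only needed on older kernels
echo -e "vfio\nvfio_iommu_type1\nvfio_pci\nvfio_virqfd" > /etc/modules-load.d/vfio.conf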

update-initramfs -u

After reboot, verified the binding:

lspci -k | grep -A 2 "01:00.0"

# Kernel driver in use: vfio-pci
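The check above covers function 01:00.0 only. Since all four functions are passed through together, it can be worth confirming each one. The sketch below reads the driver symlink that sysfs exposes per device. The function name `check_slot_drivers` and the optional root argument are illustrative assumptions.

```shell
# Print the driver bound to each function of a PCI slot, e.g. "0000:01:00.0 vfio-pci".
# Prints "none" for a function with no driver bound.
# The optional second argument (sysfs root) is a hypothetical hook for testing.
check_slot_drivers() {
  local slot="$1" root="${2:-/sys/bus/pci/devices}" dev driver
  for dev in "$root"/"$slot".*; do
    [ -e "$dev" ] || continue
    if [ -L "$dev/driver" ]; then
      # The symlink target ends in the driver's name.
      driver="$(basename "$(readlink "$dev/driver")")"
    else
      driver="none"
    fi
    printf '%s %s\n' "${dev##*/}" "$driver"
  done
}

# Example on the host:
#   check_slot_drivers 0000:01:00
```

Every line should end in vfio-pci. A function still showing nouveau or snd_hda_intel suggests its ID is missing from the vfio-pci options or the initramfs was not rebuilt.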

VM Configuration

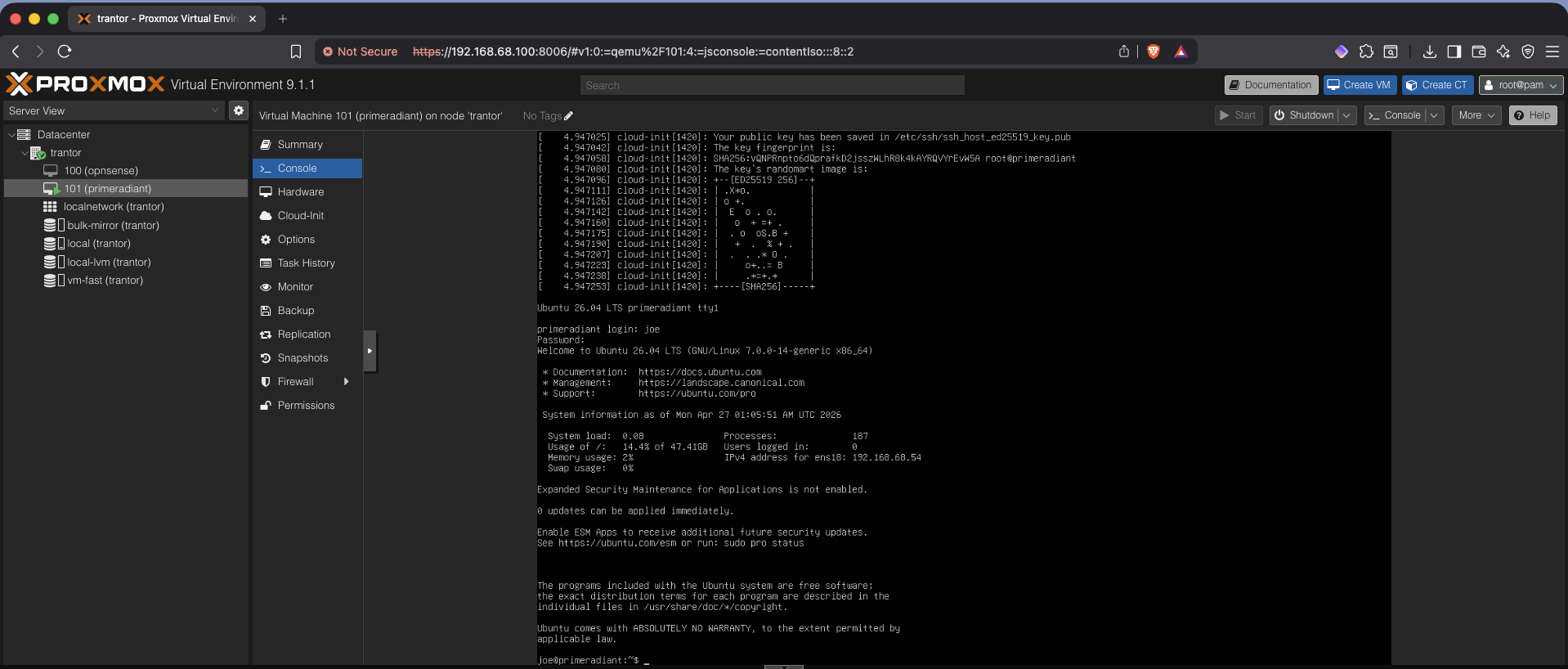

I named the VM primeradiant — a nod to Hari Seldon’s device in Asimov’s Foundation series, the tool he used to model and decode the patterns of the future. Felt appropriate for a machine built to decode things people assumed were locked.

Created a Proxmox VM (Ubuntu Server 26.04) with the following spec:

| Setting | Value |
|---|---|
| Machine type | q35 |
| BIOS | OVMF (UEFI) |
| CPU | 8 cores, host type |
| RAM | 16GB |
| Disk | 100GB on NVMe-backed LVM-Thin |
| Network | VirtIO on vmbr0 |

The host CPU type exposes the full i9-13900K instruction set to the VM — relevant for any crypto operations Hashcat may leverage beyond raw GPU throughput.
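For reproducibility, the spec in the table can be expressed as a single `qm create` call. This is a sketch under stated assumptions, not the command used for this build: the VMID 200 and the storage name local-lvm are placeholders, the installer ISO is left out, and the helper only prints the command so it can be reviewed before running.

```shell
# Print (not run) a qm command matching the VM spec table.
# VMID 200 and storage "local-lvm" are placeholder defaults, not values from this build.
qm_create_cmd() {
  local vmid="${1:-200}" storage="${2:-local-lvm}"
  echo "qm create ${vmid} --name primeradiant" \
       "--machine q35 --bios ovmf" \
       "--cores 8 --cpu host --memory 16384" \
       "--efidisk0 ${storage}:1 --scsi0 ${storage}:100" \
       "--net0 virtio,bridge=vmbr0"
}

# Example:
#   qm_create_cmd 200 local-lvm
```

Memory is given in MiB, so 16GB becomes 16384, and the `:100` suffix on the disk asks Proxmox to allocate a new 100GB volume on the named storage.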

Adding the PCI Device

With the VM stopped, added the GPU via Proxmox → Hardware → Add → PCI Device:

- Device: 0000:01:00.0

- All Functions: enabled (passes all four device functions as a unit)

- Primary GPU: disabled (host retains display via iGPU)
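With All Functions enabled, Proxmox stores the device in the VM config without a function suffix (for example `hostpci0: 0000:01:00` rather than `0000:01:00.0`). A quick way to confirm this from the shell is sketched below. The helper name `passes_all_functions` is an assumption, and the config path must be supplied, typically /etc/pve/qemu-server/<vmid>.conf.

```shell
# Succeeds if the VM config has a hostpciN entry for the slot with no ".N" function
# suffix, which is how the "All Functions" option is recorded.
passes_all_functions() {
  local conf="$1" slot="$2"
  # After the slot, only a comma (more options) or end of line is accepted.
  grep -Eq "^hostpci[0-9]+: *${slot}(,|\$)" "$conf"
}

# Example on the host (replace <vmid> with the VM's ID):
#   passes_all_functions /etc/pve/qemu-server/<vmid>.conf 0000:01:00 && echo "all functions passed"
```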

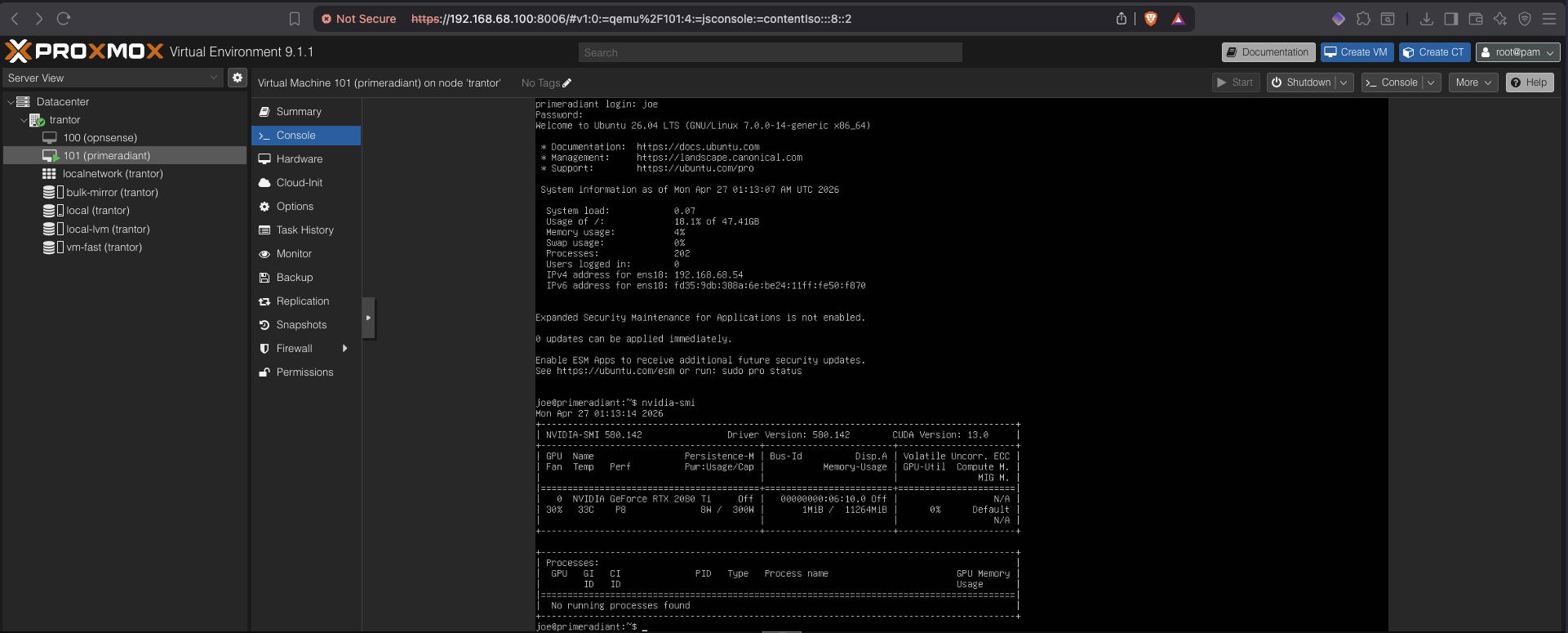

GPU Driver and Hashcat

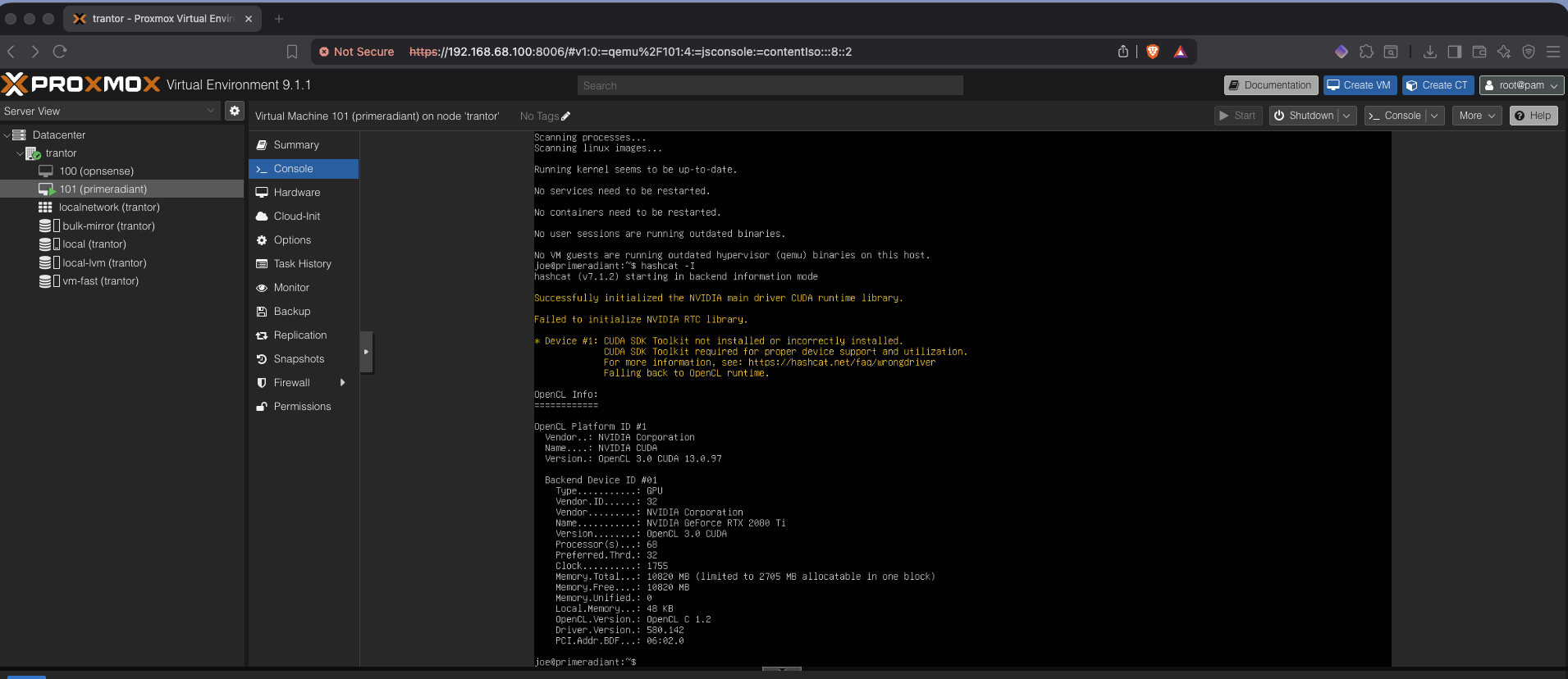

Inside the VM, installed NVIDIA drivers and verified detection:

sudo apt install -y nvidia-driver-535 nvidia-utils-535

nvidia-smi

Installed Hashcat and confirmed GPU enumeration:

sudo apt install -y hashcat

hashcat -I
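For a scriptable version of the driver check, `nvidia-smi` can emit selected fields as CSV. The wrapper below is a small sketch: the function name `gpu_summary` and its optional argument for the binary name are assumptions added so it fails cleanly when the utilities are not installed.

```shell
# Print name, driver version and total memory for each GPU the driver can see.
# The optional argument (binary to call) is a hypothetical hook for testing.
gpu_summary() {
  local smi="${1:-nvidia-smi}"
  if ! command -v "$smi" >/dev/null 2>&1; then
    echo "$smi not found: driver utilities are not installed" >&2
    return 1
  fi
  "$smi" --query-gpu=name,driver_version,memory.total --format=csv,noheader
}

# Example inside the VM:
#   gpu_summary
```

An empty result or an error here usually points at the passthrough or driver layer rather than at Hashcat.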

Wordlists

A cracking station is only as good as its wordlists. Installed:

- rockyou.txt — the standard baseline, covers the majority of weak passwords in real engagements

- Kaonashi — large real-world password corpus compiled from breach data, significantly broader coverage than rockyou for complex targets

- SecLists — broader collection covering usernames, common patterns, and targeted lists

Wordlists are stored in ~/wordlists/ and referenced directly in Hashcat commands.
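A quick inventory helps when choosing which list to run first. The sketch below prints the line count for each top-level .txt file in the wordlist directory. The function name `wordlist_counts` is an assumption, and nested collections such as SecLists are deliberately not walked.

```shell
# Print "<lines><TAB><file>" for each top-level .txt wordlist in a directory.
# Defaults to ~/wordlists; subdirectories are not descended into.
wordlist_counts() {
  local dir="${1:-$HOME/wordlists}" f
  for f in "$dir"/*.txt; do
    [ -f "$f" ] || continue
    # The arithmetic expansion strips any padding that wc adds on some systems.
    printf '%s\t%s\n' "$(( $(wc -l < "$f") ))" "${f##*/}"
  done
}

# Example:
#   wordlist_counts ~/wordlists
```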

Validation

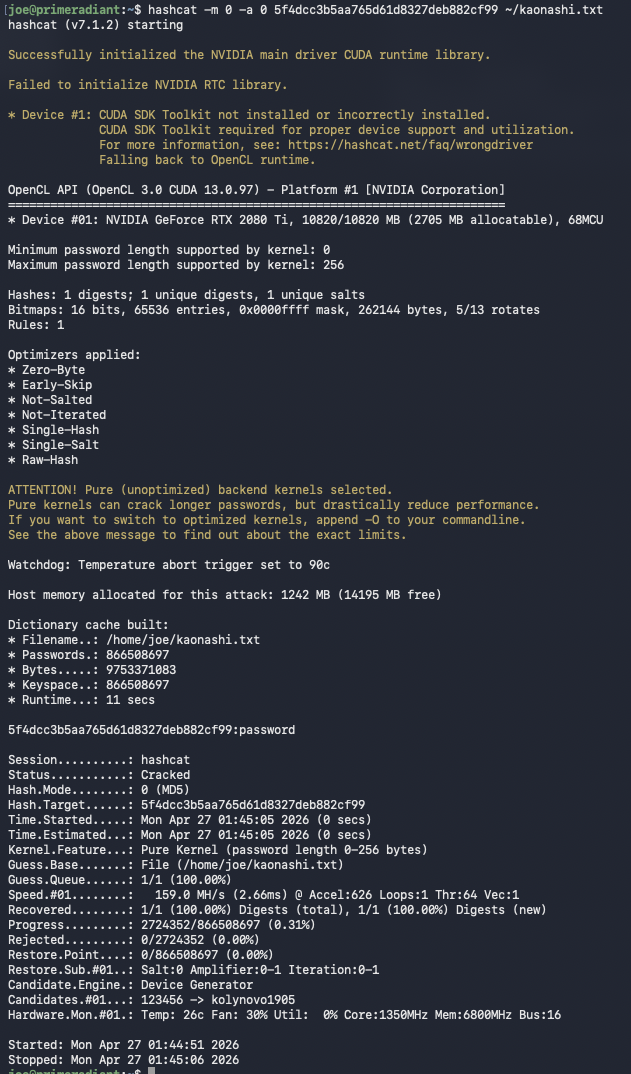

Confirmed end-to-end functionality with a known MD5 hash:

echo -n "password" | md5sum

# 5f4dcc3b5aa765d61d8327deb882cf99

hashcat -m 0 -a 0 5f4dcc3b5aa765d61d8327deb882cf99 ~/wordlists/rockyou.txt
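A single hash proves the pipeline works. A small file of known hashes makes a slightly better smoke test. The helper below is an illustrative sketch (the name `md5_hashes` and the file name test.hashes are assumptions) that prints one MD5 digest per argument in the format mode 0 expects.

```shell
# Print the MD5 hex digest of each argument, one per line (input for hashcat -m 0).
md5_hashes() {
  local word
  for word in "$@"; do
    # printf avoids the trailing newline that would change the digest.
    printf '%s' "$word" | md5sum | cut -d' ' -f1
  done
}

# Example:
#   md5_hashes password 123456 letmein > test.hashes
#   hashcat -m 0 -a 0 test.hashes ~/wordlists/rockyou.txt
#   hashcat -m 0 --show test.hashes     # list the recovered plaintexts
```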

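The RTX 2080 Ti delivers strong throughput on fast hash modes such as MD5 and NTLM. bcrypt is deliberately slow and scales with its cost factor, so expect far lower rates there. That range makes this a capable platform for both CTF work and realistic engagement simulation.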
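To put actual numbers on these modes for a given card, Hashcat's built-in benchmark can be run once per mode. The loop below is a sketch: the function name `bench_modes` and the `HASHCAT` override variable are assumptions, while the mode numbers are Hashcat's own (0 for MD5, 1000 for NTLM, 3200 for bcrypt).

```shell
# Run hashcat's benchmark for each hash mode given (default: MD5, NTLM, bcrypt).
# The HASHCAT variable is a hypothetical override for the binary name.
bench_modes() {
  local bin="${HASHCAT:-hashcat}" mode
  [ "$#" -gt 0 ] || set -- 0 1000 3200
  for mode in "$@"; do
    "$bin" -b -m "$mode" || return 1   # stop at the first failing benchmark
  done
}

# Example inside the VM:
#   bench_modes            # MD5, NTLM, bcrypt
#   bench_modes 1400       # SHA2-256 only
```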

Key Takeaways

- IOMMU and vfio-pci configuration is the critical path for GPU passthrough: vfio-pci must claim the device before any GPU driver loads

- IOMMU grouping matters: a device sharing a group with system-critical hardware cannot be passed through cleanly

- Running the cracking station as a VM rather than bare metal adds meaningful operational value: isolation, snapshots, and clean separation of workloads

- The iommu=pt flag is worth including on any passthrough host, since it reduces overhead for non-passthrough devices